Lab Grown Neurons Learn to Play Doom

What neurons learning video games tells us about the nature of intelligence.

The brain doesn’t experience the world directly.

It receives sensory information from the eyes, skin, and muscles, converted into electrical signals, and from those signals alone it builds a model of what’s out there. Then it sends other electrical signals back out to act on the world. This is the loop: perception and action.

Which raises an interesting and uncomfortable question: if you gave a dish of neurons in a lab a synthetic stream of electrical signals, one that responded to their activity the way a body would, would they know the difference?

Researchers tried it.

It turns out there is a precise mathematical law governing how nervous systems respond to that kind of feedback. When you give neurons a world built on that law, something remarkable happens.

They learn.

Scientists have already used this principle to teach neurons to play video games. First Pong. Then Doom.

The company that built this system is called Cortical Labs. Understanding who they are and what they are actually trying to do matters, because the press coverage has mostly gotten the framing wrong.

To understand why, you first have to understand how the brain actually works.

How the Brain Actually Works

The standard textbook picture of the brain is a camera attached to a processor. Sensory data flows in, gets processed, and produces a response. This picture is wrong, and the evidence against it has been accumulating for decades.

The more accurate model — formalized most rigorously by theoretical neuroscientist Karl Friston at University College London — is that the brain is fundamentally a prediction engine.1,2 At every moment, across every level of its architecture, the brain is generating a model of what should be happening next and comparing that prediction against what actually arrives. The gap between prediction and reality is called prediction error. Minimizing that gap is, under this framework, the organizing principle of all neural computation.

Friston formalized this as the Free Energy Principle: biological systems minimize a quantity called variational free energy, an upper bound on surprise that grows with the divergence between the system’s internal model of the world and the evidence it actually receives.1,3 This is not a metaphor. It is a testable mathematical claim about how nervous systems self-organize.
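To make that quantity less abstract, here is a minimal sketch for a toy model with two hidden states and one observation. The probabilities are made-up illustrative numbers, not values from Friston's papers or the DishBrain study. It computes variational free energy as the expected difference between the log of the beliefs and the log of the joint model, and checks that it equals surprise when the beliefs are the exact posterior and exceeds surprise otherwise.

```python
import math

def free_energy(q, prior, likelihood_o):
    """Variational free energy F = sum_s q(s) * [ln q(s) - ln p(o, s)]."""
    total = 0.0
    for q_s, p_s, p_o_given_s in zip(q, prior, likelihood_o):
        if q_s > 0:
            total += q_s * (math.log(q_s) - math.log(p_s * p_o_given_s))
    return total

# Illustrative (assumed) generative model: two hidden states, one observation.
prior = [0.5, 0.5]           # p(s)
likelihood_o = [0.9, 0.2]    # p(o | s) for the observation that arrived

evidence = sum(p * l for p, l in zip(prior, likelihood_o))   # p(o)
surprise = -math.log(evidence)                               # -ln p(o)
posterior = [p * l / evidence for p, l in zip(prior, likelihood_o)]

f_exact = free_energy(posterior, prior, likelihood_o)    # beliefs match the evidence
f_poor = free_energy([0.5, 0.5], prior, likelihood_o)    # beliefs ignore the evidence

print(f"surprise={surprise:.4f}  F(exact)={f_exact:.4f}  F(poor)={f_poor:.4f}")
```

The gap between `f_poor` and `surprise` is the divergence between the poor beliefs and the true posterior, which is the part a system can shrink by updating its model.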

The behavioral extension of this is Active Inference.4,5 The key insight is that you can reduce prediction error in two ways. You can update your internal model to better match reality. Or you can act on the environment to make reality better match your model. Reaching for a glass of water is, under this framework, a self-fulfilling prediction: your motor cortex generates the expectation that your hand will close around the glass, and your muscles fire to make that expectation true. Hunger is a prediction error signal — your body’s model says glucose levels should be higher than they are. Pain is a prediction error signal. Curiosity is a prediction error signal.
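The two routes to a smaller prediction error can be sketched with a single number standing in for both the belief and the world. This is a toy illustration under assumed names and update rates, not an implementation of Active Inference as defined in the cited papers: `perceive` moves the belief toward the world, and `act` moves the world toward the belief.

```python
def perceive(belief, observation, rate=0.5):
    """Perception: revise the belief toward what was observed."""
    return belief + rate * (observation - belief)

def act(world, belief, rate=0.5):
    """Action: change the world toward what was predicted."""
    return world + rate * (belief - world)

predicted_hand, actual_hand = 1.0, 0.0   # "hand on the glass" vs. where it is now

# Route 1: keep the world fixed and update the model.
b = predicted_hand
errors_perception = []
for _ in range(5):
    b = perceive(b, actual_hand)
    errors_perception.append(abs(actual_hand - b))

# Route 2: keep the model fixed and move the hand.
w = actual_hand
errors_action = []
for _ in range(5):
    w = act(w, predicted_hand)
    errors_action.append(abs(predicted_hand - w))

print(errors_perception, errors_action)
```

Both error lists shrink at the same rate, but the end states differ: in the first route the system has given up on the prediction, and in the second the hand has arrived at the glass.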

This framing has a critical implication for artificial systems — one that is underappreciated in most AI coverage.

Modern large language models and deep neural networks are, in a structural sense, also prediction engines. A transformer predicts the next token. A convolutional network predicts the correct label. The training process minimizes the gap between prediction and ground truth, which is mathematically similar to what the Free Energy Principle describes in biological systems. The resemblance runs deep: many of the researchers who built modern deep learning were drawing, consciously or not, on decades of computational neuroscience.

But there is a critical difference in how the prediction loop closes. In a biological system, prediction error is continuous, embodied, and consequential — the organism acts on the world and receives feedback that is causally linked to what it just did. The loop runs at the timescale of experience. In a standard neural network, the loop closes offline, against a static dataset, during training. After training, the model generates predictions without updating. It no longer has a world; it has a frozen approximation of one.

This architecture has a well-known failure mode that is, in retrospect, entirely predictable. When you show a language model evidence that its answer is wrong, it will sometimes acknowledge the correction and then, a few exchanges later, revert confidently to the original wrong answer. The model is not being stubborn. It is doing exactly what it was trained to do: generate the token sequence most consistent with its internal model of what a correct response looks like. The correction is a new input, but it does not update the underlying weights. The world changed; the model did not. This is, in the vocabulary of Active Inference, a system that can update its outputs but cannot update its model: one half of the prediction-error minimization loop with the other half removed.

Biological neurons, given a world that punishes wrong predictions with unpredictable stimulation, update continuously, in real time — because the cost of staying wrong is immediate and physical.
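The contrast between a loop that closes offline and one that stays open can be shown with a deliberately crude analogy. The sketch below is not a model of a transformer or of a neuron; it is a one-parameter estimator with assumed numbers, run once with updating switched off after "training" and once with updating left on after the world changes.

```python
def run(observations, learning_rate):
    """Track a stream of observations and return the final estimate."""
    estimate = 0.0
    for obs in observations:
        estimate += learning_rate * (obs - estimate)
    return estimate

training_world = [0.0] * 20   # what the system saw before deployment
changed_world = [5.0] * 20    # what the world looks like afterwards

# Frozen system: learns from the training data, then never updates again.
frozen_estimate = run(training_world, learning_rate=0.2)

# Online system: keeps updating as the changed world arrives.
online_estimate = run(training_world + changed_world, learning_rate=0.2)

frozen_error = abs(5.0 - frozen_estimate)
online_error = abs(5.0 - online_estimate)
print(f"frozen error={frozen_error:.3f}  online error={online_error:.3f}")
```

The frozen estimator stays as wrong as it was on the first step after the change, while the online one closes most of the gap within twenty observations.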

The DishBrain experiment was, at its core, a test of whether this framework held in a radically minimal system — a single monolayer of cortical neurons with no body, no history, and no prior exposure to anything.

Giving Neurons a World

The experimental setup, described in the 2022 Neuron paper by Brett Kagan and colleagues (including Friston as a co-author), was elegant in its simplicity.6

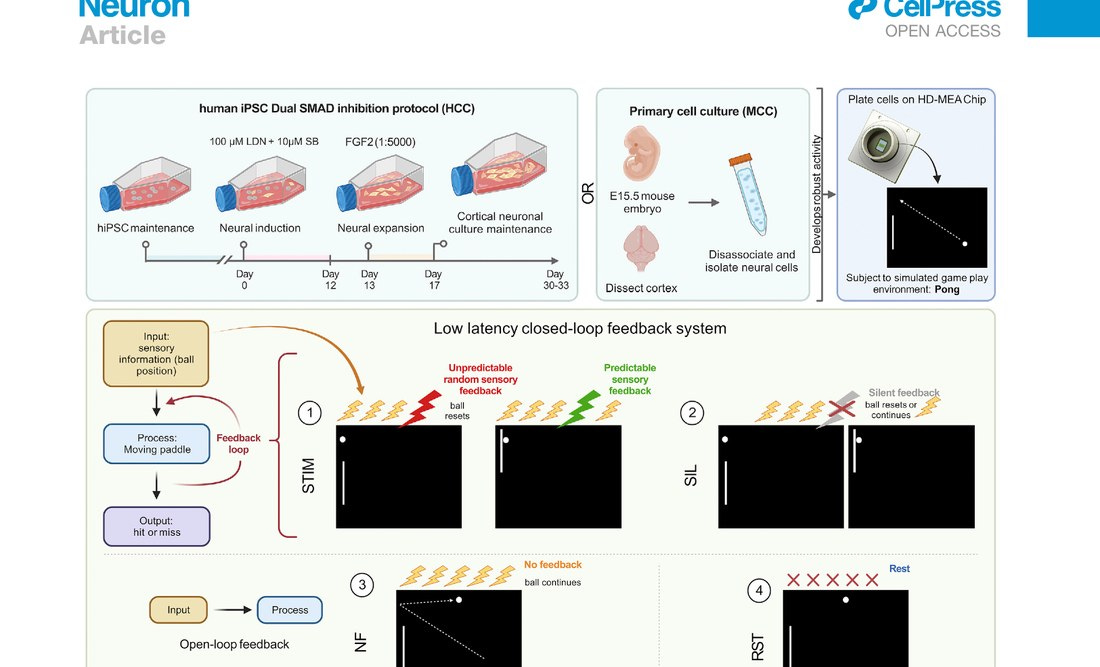

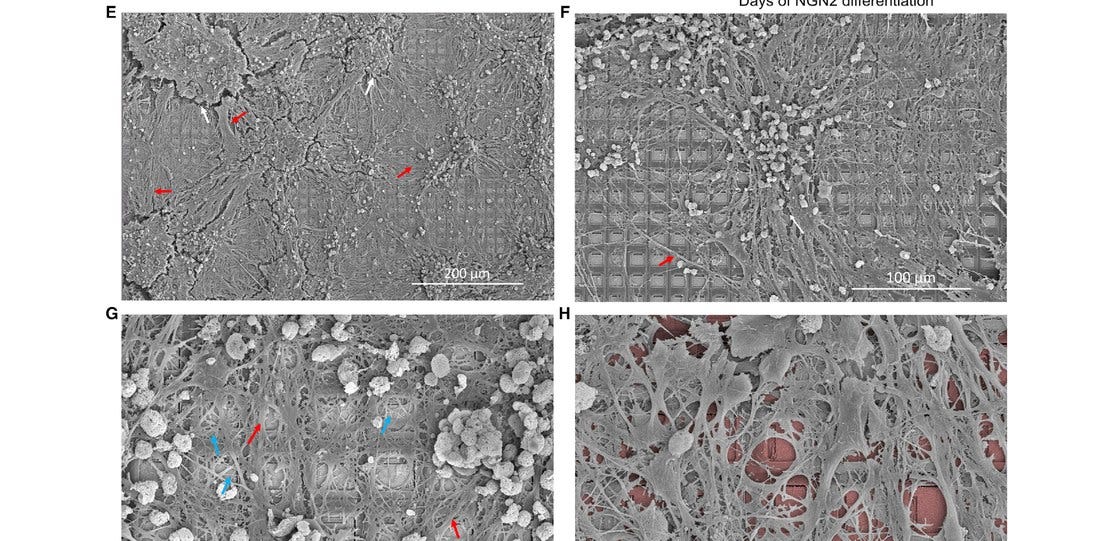

Cortical neurons — derived from either mouse embryos at embryonic day 15.5 or human induced pluripotent stem cells — were cultured onto a high-density multi-electrode array (HD-MEA) containing over 26,000 platinum electrodes across an 8mm² surface. Of these, 1,024 were routed for recording, and 8 were designated as sensory input electrodes. The neurons grew across the chip over several weeks, forming dense interconnected networks. When the cultures reached sufficient electrophysiological maturity — evidenced by synchronized bursting activity — they were connected to a software simulation of Pong.

Ball position was encoded as electrical pulses delivered to the sensory electrodes, using a combined rate and place coding scheme — a method with close biological parallels to how the rodent barrel cortex encodes whisker deflection. Paddle movement was decoded from spiking activity in two designated motor regions: excess activity in motor region 1 moved the paddle up; motor region 2, down. The neurons had no way of knowing what any of this meant. The mapping between their activity and the game world was entirely arbitrary.
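The encode and decode steps described above can be sketched as follows. This is a simplified illustration, not the paper's actual pipeline: the function names, the normalized coordinates, and the 4 to 40 Hz range are assumptions chosen for the example. Place coding picks which of the 8 input electrodes to stimulate from the ball's vertical position, rate coding sets the pulse frequency from its distance to the paddle, and the paddle moves according to which motor region is more active.

```python
N_INPUT_ELECTRODES = 8        # number of sensory electrodes, per the article
MIN_HZ, MAX_HZ = 4.0, 40.0    # assumed illustrative stimulation range

def encode_ball(x, y):
    """Map a ball position to (electrode index, pulse rate in Hz).

    x and y are normalized to [0, 1]; x = 0 means the ball is at the paddle.
    """
    x = min(max(x, 0.0), 1.0)
    y = min(max(y, 0.0), 1.0)
    # Place code: vertical position selects the electrode.
    electrode = min(int(y * N_INPUT_ELECTRODES), N_INPUT_ELECTRODES - 1)
    # Rate code: the closer the ball, the faster the pulses.
    rate_hz = MIN_HZ + (1.0 - x) * (MAX_HZ - MIN_HZ)
    return electrode, rate_hz

def decode_paddle(spikes_region_1, spikes_region_2):
    """Move the paddle toward whichever motor region fired more."""
    if spikes_region_1 > spikes_region_2:
        return "up"
    if spikes_region_2 > spikes_region_1:
        return "down"
    return "stay"

print(encode_ball(0.25, 0.6), decode_paddle(12, 5))
```

Nothing in either function depends on what the signals mean, which is the point: the culture only ever sees pulses and only ever produces spikes.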

The training signal was a direct implementation of the Free Energy Principle. When the paddle missed the ball, the neurons received unpredictable, chaotic stimulation: random pulses at random sites across the 8 input electrodes. When the paddle connected, they received predictable, synchronized stimulation across all electrodes simultaneously. There was no reward in the conventional reinforcement learning sense, just the difference between order and chaos.
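A rough sketch of the two feedback types, assuming made-up pulse counts and timings rather than the paper's stimulation parameters: a hit produces the same pulse on every input electrode at regular times, and a miss produces pulses at random times on random electrodes. Each schedule is a list of `(time_ms, electrode)` pairs, and both function names are hypothetical.

```python
import random

N_INPUT_ELECTRODES = 8   # number of sensory electrodes, per the article

def hit_feedback(n_pulses=5, interval_ms=10.0):
    """Predictable feedback: all electrodes pulse together at regular times."""
    return [(i * interval_ms, electrode)
            for i in range(n_pulses)
            for electrode in range(N_INPUT_ELECTRODES)]

def miss_feedback(n_pulses=40, window_ms=4000.0, rng=None):
    """Unpredictable feedback: random electrodes pulse at random times."""
    rng = rng or random.Random()
    return sorted((rng.uniform(0.0, window_ms), rng.randrange(N_INPUT_ELECTRODES))
                  for _ in range(n_pulses))

hit = hit_feedback()
miss = miss_feedback(rng=random.Random(0))   # seeded only to make the demo repeatable
print(hit[:3], miss[:3])
```

The hit schedule can be fully predicted from its first pulse; the miss schedule cannot, and that difference is the entire training signal.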

The published results draw on 399 test sessions and roughly 43 biological replicates. A few things stand out.

First, the learning was fast. Significant improvement in average rally length — the primary performance metric — emerged within five minutes of real-time gameplay. Not five epochs. Five minutes. For context, a standard deep reinforcement learning algorithm trained on the same task takes roughly 90 minutes to reach comparable performance.

Second, the control conditions were rigorous. Cultures receiving sensory information but no feedback showed no learning. Cultures receiving feedback but no sensory information showed no learning. Electrically inactive HEK293T cells showed no learning. The key variable was the closed-loop feedback between neural activity and environmental consequence. Embodiment — in the specific sense of actions having observable consequences — was required.

Third, and perhaps most striking: human cortical cells initially performed worse than mouse cortical cells before reversing to significantly outperform them. In the first five minutes of gameplay, human cortical cells (HCCs) showed lower rally lengths than mouse cortical cells (MCCs) and even the media-only baseline. The authors interpret this initial underperformance as possible exploratory behavior — a period of active sampling of the environment before the system settles on a strategy. By the final 15 minutes, HCCs had reversed the trend and significantly outperformed all control groups. To the authors’ knowledge, this is the first empirical demonstration that human cortical neurons exhibit greater adaptive learning capacity than their rodent counterparts under equivalent conditions. It is a result that had been theorized but never directly tested, and it matters: it is one of the clearest pieces of evidence that human cortical neurons carry genuinely distinct computational properties from what we see in model organisms.

The entropy analysis added a mechanistic layer. The team used information entropy of neural responses as a proxy for the average “surprise” experienced by the culture, the quantity that variational free energy bounds from above. As predicted by theory, entropy was lower during gameplay than at rest. Following unpredictable (miss) feedback, entropy spiked. Following predictable (hit) feedback, it did not. The neurons were reorganizing their activity to reduce the unpredictability of their sensory inputs, which is precisely what the Free Energy Principle predicts.
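For readers unfamiliar with the measure, here is a minimal sketch of Shannon entropy over a sequence of discrete responses. The two example sequences are invented, and the way the paper bins neural activity into symbols is not reproduced here; the sketch only shows why an orderly response pattern scores low and a scattered one scores high.

```python
import math
from collections import Counter

def shannon_entropy(symbols):
    """Shannon entropy, in bits, of the empirical distribution of symbols."""
    counts = Counter(symbols)
    n = len(symbols)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

# Invented examples: which electrode was most active in each time bin.
orderly = [3, 3, 3, 3, 3, 3, 3, 3]      # same response every time
scattered = [0, 1, 2, 3, 4, 5, 6, 7]    # a different response every time

print(shannon_entropy(orderly), shannon_entropy(scattered))
```

With eight equally likely responses the entropy is 3 bits, the maximum for eight symbols; with one response repeated it is zero.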

One important limitation the authors were honest about: between-session learning was not robustly observed. Cultures appeared to relearn associations within each session but did not reliably retain them across sessions. This is consistent with the use of cortical neurons, which are not specialized for long-term memory storage in vivo, and it is a clear direction for future work.

From Pong to Doom

The Pong result established that embodied neurons could learn. The question that followed was whether this was a narrow demonstration or the beginning of something more general.

In early 2025, Cortical Labs upgraded to the CL1, which replaced the earlier electrode architecture with a stable planar array of 59 electrodes at sub-millisecond latency, extended neuron viability to six months, and built a Python API that abstracts the wetware sufficiently that researchers without biology backgrounds can program it directly.

The Doom demonstration arrived shortly after launch, built by independent developer Sean Cole in approximately one week. The core engineering challenge was translation: Doom’s visual stream had to be converted into a language neurons can process, which is electricity. Cole and the Cortical Labs team mapped the game’s video feed into patterns of electrical stimulation delivered across the electrode array. Enemies approaching from the left generated one set of signals; open corridors, another. The neurons received these inputs, processed them through their firing patterns, and the system mapped those patterns back to in-game actions — a specific firing pattern triggers a shot, another moves the character right, another advances forward.
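The translation layer can be pictured with a deliberately simplified sketch. None of this is the CL1 API or Cole's actual code: the three screen zones, the action names, the spike threshold, and both function names are hypothetical. It shows only the shape of the loop, with game state going in as a stimulation pattern and firing activity coming out as an action.

```python
def encode_frame(enemy_x_positions, screen_width):
    """Count enemies in the left, center, and right thirds of the screen.

    The counts stand in for stimulation intensity on three electrode groups.
    """
    zones = [0, 0, 0]
    for x in enemy_x_positions:
        zone = min(int(3 * x / screen_width), 2)
        zones[zone] += 1
    return tuple(zones)

def decode_action(spike_counts, threshold=5):
    """Pick the action whose assigned region fired most, if it fired enough."""
    action, count = max(spike_counts.items(), key=lambda kv: kv[1])
    return action if count >= threshold else None

stimulation = encode_frame([10, 20, 300], screen_width=320)
action = decode_action({"shoot": 9, "turn_right": 3, "move_forward": 1})
print(stimulation, action)
```

A real encoding has to carry far more than enemy positions, which is part of why the article treats the interface itself as the hard-won result.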

The neurons can navigate toward enemies and shoot at them. Solving the game is a different matter entirely. Doom, at its core, is a puzzle: it requires spatial memory, key-hunting, route planning, and the ability to sequence decisions across time. The CL1’s culture lacks persistent between-session memory and the architectural complexity to hold a map of a level in anything resembling working memory. The character wanders, reacts, and fires, but it does not progress through the game in any strategic sense. It plays like a reflex, not a plan. Brett Kagan acknowledged this directly: he said the neurons are “learning,” but in the same breath called the result an early-stage proof of the interface, with the harder question of gameplay competence still ahead. The Cortical Labs team is explicit that they have “solved the interface problem,” meaning the bridge between digital game state and biological tissue, but that better training methods, encoding schemes, and reward structures are needed before the neurons can do more than react to what is directly in front of them.

The cultural resonance matters here even if the performance benchmarks are modest. “Can it run Doom?” has been the unofficial benchmark for computational legitimacy since the 1990s — a question applied to calculators, tractors, ATMs, and now a dish of living human brain cells. The answer, as of this year, is yes. The more important answer — can it beat Doom — remains, for now, no. That gap between reflex and strategy is precisely the terrain that the next generation of biological computing research will need to cross.

The CL1, the Competition, and What Comes Next

Cortical Labs is not the only organization working in this space, and understanding the competitive landscape puts their position in context.

FinalSpark, a Swiss company, has taken a different approach to neural reinforcement — using dopamine as a chemical reward signal rather than electrical feedback. Kagan has been explicit about why Cortical Labs avoided this: dopamine-based reward is difficult to scale to more complex devices, and the company’s long-term ambition requires an approach that can grow with the architecture. Intel and IBM have both invested heavily in neuromorphic silicon — chips designed to mimic neural computation — but mimicking and implementing are different propositions, and both approaches remain power-hungry relative to biological alternatives. In 2024, Chinese researchers introduced MetaBOC, a brain-on-a-chip platform representing a parallel development track. At Johns Hopkins, Tufts, and Melbourne, organoid intelligence programs are exploring three-dimensional brain organoid structures as computational substrates — cultures with layered architecture closer to in vivo complexity than the flat monolayers of DishBrain.

The field now has a name — Organoid Intelligence (OI) — and funding to match.

Cortical Labs was founded in Melbourne in 2019 by Dr. Hon Weng Chong, a clinician by training who started with a single question: what if the entire field had the problem backwards? The dominant paradigm in AI hardware takes inspiration from the brain and tries to implement it in silicon — neuromorphic chips, spiking neural networks, in-memory computing architectures. Chong looked at that effort, noted that no artificial system had yet matched the brain on its own terms, and asked what nobody seemed to want to ask: what if you just used neurons?

His framing for the CL1 is worth stating directly. He describes it as a reverse Neuralink. Neuralink’s bet is that you can put a chip inside a brain and use the brain’s neurons to interface with machines. Cortical Labs inverted the proposition: put the brain onto the chip. Give it a body made of silicon and software. Let it compute from there. Cortical Labs raised $11 million from investors including Horizons Ventures, Blackbird Ventures, and In-Q-Tel, the last being the CIA’s venture arm, which tends to notice when a technology has implications beyond the academic. Their commercial product, the CL1, was announced at Mobile World Congress in Barcelona in March 2025. The first 115 units are shipping this year.

The CL1 itself is a remarkable piece of hardware given how recently this was science fiction. Each unit contains 800,000 neurons grown from iPSCs derived from adult donor skin or blood samples. A proprietary life-support system maintains temperature, nutrients, gas exchange, and waste filtration, keeping the culture viable for up to six months. The biOS — Biological Intelligence Operating System — handles the firmware abstraction between wetware and Python. A rack of 30 units consumes 850–1,000 watts. For comparison, running equivalent AI workloads on conventional GPU infrastructure requires tens of kilowatts. The first 115 units ship at $35,000 each, with rack pricing at $20,000 per unit.

For labs that cannot justify the capital outlay, Cortical Labs offers cloud access at $300 per week per unit — what Chong calls Wetware-as-a-Service, or WaaS. The model is deliberate. Chong has said he wants to bring the cost of biological computing experimentation down to the price of a Zoom subscription. The Python API means a researcher with no background in electrophysiology can deploy code to living neurons without owning a pipette. This is a distribution strategy as much as a pricing strategy: the fastest way to find out what biological computers are actually good at is to let thousands of researchers try things you have not thought of yet.
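The figures quoted above are easier to compare once they are put on a per-unit basis. The sketch below uses only the numbers given in the article; the break-even figure is a naive comparison of purchase price against cloud rental and ignores running costs, consumables, and the six-month culture lifetime.

```python
RACK_UNITS = 30
RACK_WATTS = (850, 1000)        # quoted range for a full rack
UNIT_PRICE_USD = 35_000         # standalone unit
RACK_UNIT_PRICE_USD = 20_000    # per unit when bought as a rack
CLOUD_USD_PER_WEEK = 300        # cloud access, per unit

# Power drawn by a single unit, low and high ends of the quoted range.
watts_per_unit = tuple(w / RACK_UNITS for w in RACK_WATTS)

# Cost of a full rack at the rack price.
rack_cost_usd = RACK_UNITS * RACK_UNIT_PRICE_USD

# Weeks of cloud rental that add up to the standalone purchase price.
breakeven_weeks = UNIT_PRICE_USD / CLOUD_USD_PER_WEEK

print(f"{watts_per_unit[0]:.1f}-{watts_per_unit[1]:.1f} W per unit")
print(f"${rack_cost_usd:,} per rack")
print(f"{breakeven_weeks:.0f} weeks of cloud access per purchased unit")
```

On these numbers a single unit draws roughly 28 to 33 watts, and a lab would need more than two years of weekly cloud rental before the rental bill matched the purchase price of one unit.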

That openness is already producing unexpected use cases. The obvious applications are in drug discovery and neurological disease modeling — the CL1 provides a human-relevant, animal-testing-free substrate for studying how compounds affect real neural circuits in real time, something no current in vitro model offers with comparable fidelity. A culture can be exposed to a candidate neuropsychiatric drug and its learning dynamics observed directly. Changes in entropy, plasticity, firing patterns — all quantifiable against the same closed-loop gameplay benchmarks used in the DishBrain paper. This alone represents a significant advance over the standard tools of pharmaceutical neuroscience.

But the inbound interest has ranged considerably further. Robotics groups are exploring biological controllers for real-time adaptive movement: the fast, closed-loop adaptation that makes neurons good at Pong is exactly what conventional systems struggle to deliver in unstructured environments. Government and defense interest, signaled by In-Q-Tel’s presence on the cap table, likely intersects with autonomous systems and pattern recognition at low power budgets. And then there are the genuinely strange inquiries: Bitcoin miners looking at the energy profile, experimental musicians interested in biological controllers for generative audio, artists exploring what a collaborative project with living neural tissue even means. The technology surface is already wider than the roadmap covers.

The near-term constraint is scale. The current CL1 contains a culture roughly equivalent in neuron count to an insect brain. The Minimal Viable Brain project inside Cortical Labs is working toward organized cultures of around thirty neurons for targeted pattern-recognition tasks — moving from emergent self-organization toward something closer to intentional circuit design. Scaling toward primate-level complexity will require advances in three-dimensional culture architecture, electrode density, and closed-loop learning algorithms not yet at commercial readiness. The ethical framework will need to keep pace: Cortical Labs has embedded bioethicists directly into the development cycle, and the question of at what scale a biological computing substrate might warrant moral consideration is not one anyone in the field is dismissing.

Every new computing substrate — vacuum tubes, transistors, integrated circuits — passed through a period where it worked in principle but not yet at scale. The 2022 paper settled whether the neurons can learn. The open question is whether the architecture can be made reliable, scalable, and manufacturable at a cost that makes the energy and adaptability advantages matter.

The answer is being worked out right now in Melbourne, Lausanne, Baltimore, and Beijing. The CL1 is the first external proof that the process has left the lab.

What Karl Friston said about the CL1 captures the moment accurately: it is the first commercially available biomimetic computer, the ultimate in neuromorphic computing, because it uses real neurons rather than simulating them. Four billion years of evolution produced a learning machine that runs on 20 watts, generalizes from almost nothing, and updates continuously from contact with the world. We have been trying to replicate that in silicon for seventy years. Cortical Labs decided to stop replicating and start deploying.

The first shipments go out this summer. The benchmark is no longer whether biological computers can play Doom. It is what they learn to do next, once researchers with real problems get their hands on them.

Neural NeXus covers the intersection of neuroscience, AI, and the technologies reshaping how intelligence is built.

References

1. Friston, K. (2010). The free-energy principle: a unified brain theory? Nature Reviews Neuroscience, 11(2), 127–138. doi:10.1038/nrn2787

2. Friston, K. (2009). The free-energy principle: a rough guide to the brain? Trends in Cognitive Sciences, 13(7), 293–301. doi:10.1016/j.tics.2009.04.005

3. Friston, K., Kilner, J., & Harrison, L. (2006). A free energy principle for the brain. Journal of Physiology-Paris, 100(1–3), 70–87. doi:10.1016/j.jphysparis.2006.10.001

4. Friston, K., FitzGerald, T., Rigoli, F., Schwartenbeck, P., & Pezzulo, G. (2017). Active inference: a process theory. Neural Computation, 29(1), 1–49. doi:10.1162/NECO_a_00912

5. Friston, K., et al. (2016). Active inference and learning. Neuroscience & Biobehavioral Reviews, 68, 862–879. doi:10.1016/j.neubiorev.2016.06.022

6. Kagan, B.J., et al. (2022). In vitro neurons learn and exhibit sentience when embodied in a simulated game-world. Neuron, 110(23), 3952–3969. doi:10.1016/j.neuron.2022.09.001
