Awakening Machines: The Controversial Science of Conscious AI

A Deep Dive into the Science of AI Consciousness

Foreword and question to Readers:

The question of whether Artificial Intelligence (AI) could ever be conscious is not just a topic for late-night philosophical debates; it's a pressing concern that could have significant ethical and societal implications. I stumbled upon this preprint on arXiv, "Consciousness in Artificial Intelligence: Insights from the Science of Consciousness." Although it was not in my writing lineup, I couldn’t resist reading it and sharing a review with the community. I think this may be particularly timely considering the questions that are arising with increasingly sophisticated Large Language Models (LLMs).

As we consider the future of AI and consciousness, here's a question to spark some thought and discussion.

QUESTION: If we train a large language model on all of your personal data, and it becomes indistinguishable from you to all the people in your life, is that AI conscious? Why or why not?

Keep this question in mind as you read on, and feel free to share your thoughts in the comments section below. I respond to all comments (=

Introduction:

Artificial Intelligence (AI) has been advancing at an unprecedented rate, with some systems now so sophisticated that they can convincingly imitate human conversation and behavior. This level of imitation is often measured by the Turing test, a benchmark in AI research that evaluates a machine's ability to exhibit intelligent behavior indistinguishable from that of a human. As AI continues to pass such benchmarks, we are faced with a pressing and complex question: Can AI ever be conscious?

The concept of consciousness is far from straightforward. Traditionally, consciousness has been considered a uniquely human trait, often described as the state of being aware of and able to think about one's own existence, sensations, and environment. Another view defines consciousness as 'a center of experience'. One of my favorite philosophers, Sam Harris, delves into the concept of consciousness and its nuances (Harris, 2011). Harris argues that consciousness is fundamentally about subjective experience: "To use the philosopher Thomas Nagel's construction: A creature is conscious if there is 'something that it is like' to be this creature; an event is consciously perceived if there is 'something that it is like' to perceive it."

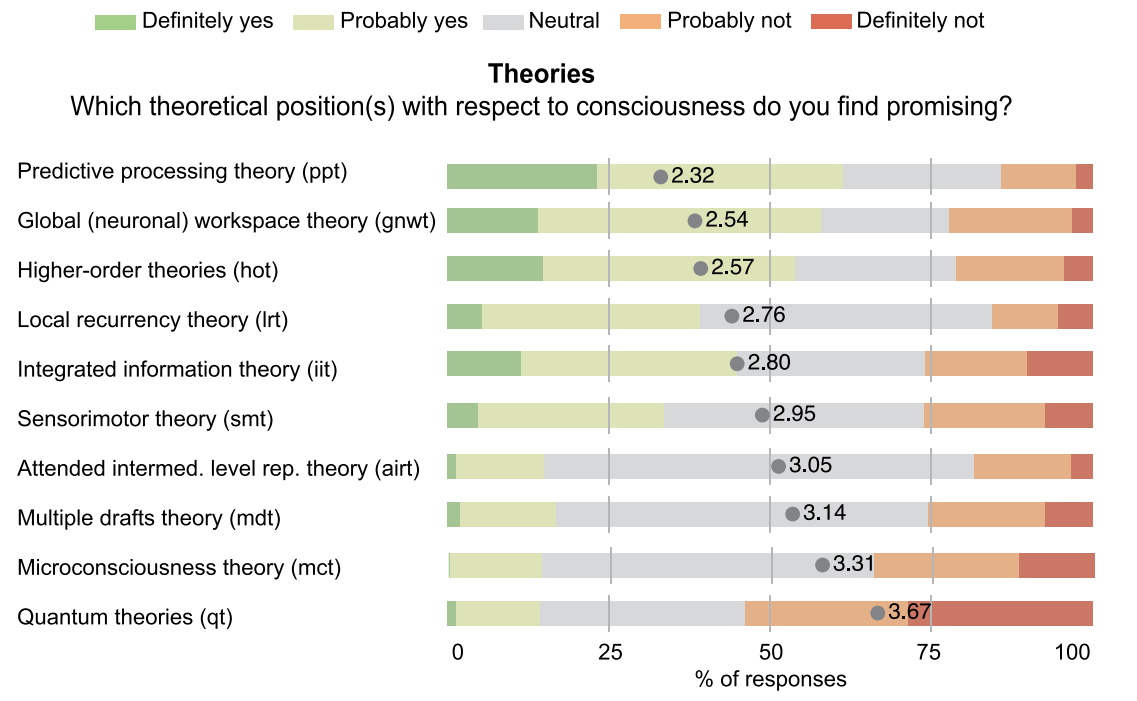

How can something like consciousness be measured, though? Researchers involved in this field of study have attempted to align on this topic through a recent academic survey (Figure 1). The disagreement in the survey makes it clear there remains considerable discussion and debate about what consciousness is and how it should be studied (Francken, 2022).

As AI continues to evolve, the line between machine intelligence and what we consider "consciousness" is becoming increasingly blurred. A recent paper dives deep into this question, providing a framework for assessing consciousness in AI based on neuroscientific theories (Butlin, 2023). My post here aims to unpack the findings and implications of this groundbreaking study.

Report Claim(s):

Note: This is an extremely lengthy article; the PDF is 88 pages, including references. This review only gives a brief, high-level summary of the information it contains. If you want a more in-depth look, please visit the source listed in the references section.

The paper argues that we can make progress in understanding consciousness in AI systems by drawing on neuroscientific theories of consciousness. To this end, the authors develop a comprehensive framework for evaluating the potential for consciousness in AI, built on well-established theories such as Recurrent Processing Theory (RPT), Global Workspace Theory (GWT), Predictive Processing Theory (PPT), Higher-Order Theories, and Attention Schema Theory.

A few notes about each:

Global Workspace Theory (GWT): This theory has been highly influential and posits that consciousness emerges from the "global broadcast" of information to multiple cognitive modules via a limited-capacity workspace.

Recurrent Processing Theory (RPT): Another significant theory that suggests consciousness arises from recurrent neural activity, allowing for the continuous updating of information.

Predictive Processing Theory (PPT): Gaining traction in recent years, this theory asserts that consciousness involves hierarchical prediction error minimization.

Higher-Order Theories: These theories propose that consciousness requires metacognitive monitoring that distinguishes signal from noise in lower-level systems.

Attention Schema Theory: A more specialized theory that states consciousness depends on a model of attention.
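To make the "global broadcast" idea from GWT a little more concrete, here is a minimal toy sketch in Python. It is not from the paper and makes no claim about consciousness; the module names, salience numbers, and the `broadcast_cycle` function are all hypothetical. It only shows the basic shape of the idea: many candidate contents compete for a limited-capacity workspace, and whatever wins is made available to every module at once.

```python
# Toy illustration only: every name and number here is hypothetical.
def broadcast_cycle(candidates, modules, capacity=1):
    """Pick the most salient items (up to `capacity`) and send them to every module."""
    # Competition: only the top `capacity` items enter the workspace.
    winners = sorted(candidates, key=lambda item: item[1], reverse=True)[:capacity]
    contents = [content for content, _salience in winners]
    # Global broadcast: each module receives the same workspace contents.
    return {name: handler(contents) for name, handler in modules.items()}


candidates = [
    ("red light ahead", 0.9),
    ("radio is playing", 0.4),
    ("seat is warm", 0.1),
]
modules = {
    "speech": lambda contents: f"say: {contents[0]}",
    "motor": lambda contents: f"act on: {contents[0]}",
    "memory": lambda contents: list(contents),
}

result = broadcast_cycle(candidates, modules)
print(result)
```

With `capacity=1`, only "red light ahead" reaches the speech, motor, and memory modules; the other two candidates never get broadcast. Raising `capacity` loosens the bottleneck, which is the part of the theory this sketch is meant to illustrate.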

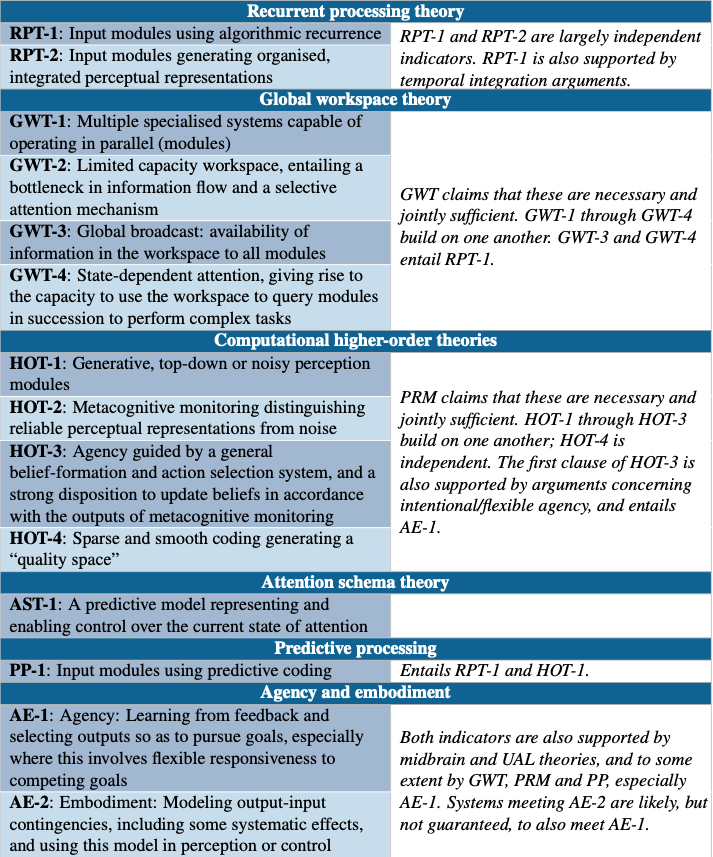

The paper identifies specific "indicator properties" from these theories that could serve as a rubric for assessing whether an AI system could potentially be conscious (Table 1). These indicators include recurrence, global broadcasting, metacognitive monitoring, attention modeling, and prediction error minimization. For example, from RPT, the indicator could be the system's ability to update information in a recurrent loop. From GWT, it could be the system's capacity to make information available to multiple simulated cognitive processes. And from Higher-Order Theories, it could be metacognitive monitoring that distinguishes signal from noise in lower-level systems.
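One way to picture how such a rubric might be used is as a simple checklist. The sketch below is my own illustration in Python, not the paper's method: the indicator names paraphrase the list above, while the `Assessment` class, the example system, and the reviewer judgments are hypothetical.

```python
from dataclasses import dataclass

# Indicator names paraphrased from the summary above; not the paper's exact wording.
INDICATORS = (
    "recurrence",
    "global_broadcasting",
    "metacognitive_monitoring",
    "attention_modeling",
    "prediction_error_minimization",
)


@dataclass
class Assessment:
    system: str
    present: frozenset  # indicators a reviewer judged to be present

    def missing(self):
        """Indicators from the rubric that were not judged present."""
        return [name for name in INDICATORS if name not in self.present]

    def coverage(self):
        """Fraction of rubric indicators judged present (bookkeeping only)."""
        return sum(name in self.present for name in INDICATORS) / len(INDICATORS)


# Hypothetical system and hypothetical judgments, for illustration only.
example = Assessment(
    system="toy-recurrent-model",
    present=frozenset({"recurrence", "prediction_error_minimization"}),
)
print(example.coverage())
print(example.missing())
```

The coverage fraction is only bookkeeping. It is not a probability that the system is conscious, and deciding whether an indicator is really "present" in a given architecture is the hard part that a checklist like this cannot do for you.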

The paper argues that while no current AI systems meet these criteria for consciousness, there are no inherent technical barriers to creating AI systems that could in the future.

Impact - Why you should care:

The framework proposed in the paper could be a game-changer for AI research. Building and optimizing AI systems requires specific benchmarks to measure against, and specific, measurable criteria for consciousness would set the stage for more targeted studies and technological advances. By providing "indicator properties" based on well-established theories of consciousness, the paper offers a roadmap for developing AI systems that could meet these criteria. In other words, these properties could serve as explicit design targets when new AI systems are built and trained. This could reshape both AI development and how we think about the ethical treatment of machines.

Is this real - a critical look:

There are several reasons to approach the claims with a high degree of skepticism.

Lack of Universal Definition. The concept of consciousness itself is a subject of ongoing debate and lacks a clear, universally accepted definition. Definitions could vary widely depending on the field—philosophy, biology, or engineering.

Preprint Status. It's important to note that the paper is a preprint and has not yet undergone peer review. This means it hasn't been scrutinized by experts in the field, which is a crucial step in academic research (as we found out in our review of LK-99).

Lack of Empirical Evidence. The paper provides a theoretical framework but does not offer empirical evidence to support the idea that AI can be conscious. Theoretical frameworks are not definitive proof and should be treated as starting points for further research. This paper reads closer to a review or prospectus than original research.

Potential for Incorrect Indicators. Even assuming that consciousness can be measured, the paper's approach relies on "indicator properties" derived from existing theories of biological systems. If incorrect indicators are selected, or are not appropriate because of some differences in substrate (e.g., biological vs silicon), this could lead to a garbage-in-garbage-out scenario, undermining the validity of any conclusions.

Applicability to Artificial Systems. The notion of "indicator properties" assumes that theories of consciousness developed for biological systems are fully applicable to artificial ones. Given our limited understanding of consciousness even in biological systems, this is an enormous leap.

Conclusions and Next Steps:

The paper offers a solid review of neuroscientific theories of consciousness and synthesizes them into a structured framework for evaluating the potential for consciousness in AI. It is both extremely interesting and timely (although a lot to digest). However, calling it 'controversial' would be a huge understatement. It is likely closer to a starting point than a conclusive rubric for answering questions about AI consciousness.

Future Relevance. As AI systems become increasingly advanced, the question of their potential consciousness will become more and more relevant. We're already seeing the emergence of AI girlfriends on websites, which raises questions about the ethical treatment of such entities. The paper's framework provides a timely foundation for these pressing issues.

Future Research. The framework opens up avenues for further empirical studies to test the "indicator properties" in real-world AI systems. Such research could either validate or challenge the paper's theoretical claims, providing a more robust understanding of the potential for consciousness in AI.

Ethical and Legal Discussions. The paper's framework raises intriguing but complex ethical and legal questions. If AI systems could potentially be conscious, what rights should they have? Could they own property, or should they be granted some form of autonomy? These are not just theoretical questions; they could have real-world implications that challenge our current legal and ethical frameworks. However, it's important to note that any such discussions should be grounded in empirical evidence, which is currently lacking. Furthermore, do we even want conscious AI? Is this safe?

Peer Review and Academic Scrutiny. As the paper is a preprint, it will be important to follow its progress through the peer-review process, as this will provide additional layers of scrutiny and potentially refine its claims and framework.

How you can support this Substack

If you find these explorations valuable, there are multiple ways to show your support:

Subscribe: Never miss an update by subscribing to the Substack.

Engage: Like or comment on posts to join the conversation.

Share: Help spread the word by sharing posts with friends or on social media.

References:

Butlin, P., Long, R., Elmoznino, E., Bengio, Y., Birch, J., Constant, A., Deane, G., Fleming, S.M., Frith, C., Ji, X. and Kanai, R., 2023. Consciousness in Artificial Intelligence: Insights from the Science of Consciousness. arXiv preprint arXiv:2308.08708.

Francken, J.C., Beerendonk, L., Molenaar, D., Fahrenfort, J.J., Kiverstein, J.D., Seth, A.K. and van Gaal, S., 2022. An academic survey on theoretical foundations, common assumptions and the current state of consciousness science. Neuroscience of Consciousness, 2022(1), p.niac011.

Harris, S., 2011. The Mystery of Consciousness. Accessed 04SEP23. https://www.samharris.org/blog/the-mystery-of-consciousness

Naskar, V. An AI Girlfriend Makes $72K In 1 Week. Accessed 04SEP23. https://levelup.gitconnected.com/an-ai-girlfriend-makes-72k-in-1-week-38fbe5263523

If we are talking about neurons, the human brain has ~90 billion, versus a couple of hundred million for the most sophisticated AI model. That is not even close: microscopic in scale compared to us. And we don't even fully understand the human brain, and may very well never be able to (unless we evolve?).

Still, I can see us being able to create the illusion of artificial consciousness through elaborate programming. Interesting times!

NB: (you may know this one already) On AI and consciousness: https://www.youtube.com/watch?v=hXgqik6HXc0

I think the problem with AI having consciousness is that, unlike humans, it can only simulate emotion. Even if it "chooses" to become as dangerous as we predict, it won't do it because it's conscious, but because somewhere in its own algorithm it decided on the best course of action for whatever problem we gave it. No remorse, no happiness or sadness, just pure, calculated execution of code. I don't think we'll ever be able to mathematically create something that replicates the human experience of emotion. However, everything we create can be almost perfectly simulated.